NASA Tournament Lab - Robonaut Challenge: 2nd and 1st place

Introduction

On April 2, 2013, I received an email from TopCoder announcing a NASA challenge to implement an image recognition algorithm for their robot Robonaut 2.

Here is the short teaser NASA published on YouTube:

The Robonaut Challenge is part of the ISS Challenge Series, organised by NASA to solve problems related to the International Space Station. The series consists of three main challenges:

- The Longeron challenge, whose goal was to optimize the ISS's power production in difficult orbital positions.

- The ISS-FIT challenge, whose purpose was to create an iPad application for monitoring the astronauts' food intake.

- And the Robonaut Challenge, which is itself split into two smaller challenges:

  - The first one is to teach Robonaut 2 (a humanoid robot created by NASA) to recognize the state and location of several buttons and switches on a taskboard.

  - The second one is to use this vision algorithm to operate the taskboard.

I will try to summarize the problem, but if you want to learn more about the subject, I suggest taking a look at the Problem Statement.

Being a big fan of space-related themes and already familiar with TopCoder marathon matches, I decided to dive in.

Problem overview

Robonaut 2 is the first humanoid robot in space. It was sent to the space station to take over tasks that are too dangerous or too mundane for astronauts. To do so, Robonaut 2 needs to learn how to interact with the input devices the astronauts use on the station, and it has to recognize the current state of a taskboard before it can operate it: which buttons are already pushed, which LEDs are on, whether the power switch is flipped, and so on.

This is the purpose of the challenge. To this end, several pictures of a single taskboard are provided. These pictures are divided into five subsets:

- ISS: real pictures of the taskboard taken on the International Space Station.

- Lab, Lab2 and Lab3: real pictures of the taskboard taken on Earth from different positions. Lab pictures are more similar to the ISS pictures than Lab3 pictures are.

- Sim: images generated by a 3D simulation of the taskboard.

Strong performance on real imagery is preferred over performance on simulated imagery. Therefore, the ISS pictures carry a higher score coefficient than the Lab pictures, which in turn weigh more than the Lab2 pictures, and so on.
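To make the effect of the coefficients concrete, here is a minimal sketch that assumes the overall score is a weighted average of per-subset accuracies. The struct, the function and the weights used below are hypothetical; the actual coefficients and scoring formula are defined in the Problem Statement and are not reproduced here.

```cpp
#include <cassert>
#include <map>
#include <string>
#include <vector>

// Accuracy obtained on one image subset (hypothetical structure).
struct SubsetResult {
    std::string subset; // "ISS", "Lab", "Lab2", "Lab3" or "Sim"
    double accuracy;    // fraction of elements recognized correctly, in [0, 1]
};

// Weighted average of per-subset accuracies. The weights are supplied by the
// caller; they are assumptions, not the official contest coefficients.
double weightedScore(const std::vector<SubsetResult>& results,
                     const std::map<std::string, double>& weights) {
    double num = 0.0, den = 0.0;
    for (const auto& r : results) {
        assert(r.accuracy >= 0.0 && r.accuracy <= 1.0);
        auto it = weights.find(r.subset);
        if (it == weights.end()) continue; // subsets without a weight are ignored
        num += it->second * r.accuracy;
        den += it->second;
    }
    return den > 0.0 ? num / den : 0.0;
}
```

With decreasing weights such as ISS=5, Lab=4, Lab2=3, Lab3=2, Sim=1 (again, illustrative values), a perfect ISS result paired with a failed Sim result still scores about 0.83, which is why effort on real imagery pays off more than effort on simulated imagery.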

Simulated image of the taskboard

Real picture taken on the ISS

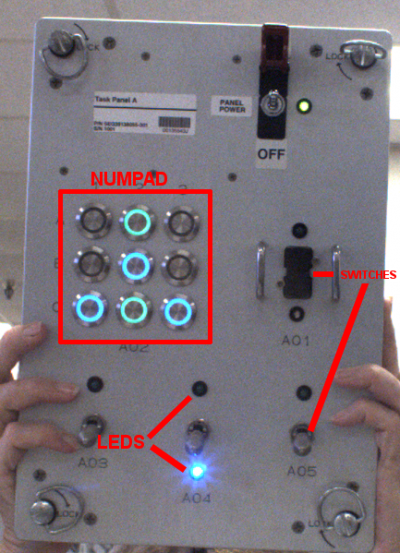

On the taskboard, the following items must be recognized:

- LED positions and states (ON/OFF).

- Numpad button positions and states (ON/OFF).

- Switch positions and states (UP/DOWN, plus CENTER for some switches).
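One way to represent the expected output for these three element families is sketched below. It is only an illustration of the data involved: the type names, the `id` field and the validity rules are assumptions derived from the list above, not the format required by the contest or used in my submission.

```cpp
#include <cassert>
#include <string>
#include <utility>

// The three element families listed above.
enum class ElementKind { Led, NumpadButton, Switch };
// Union of all states that appear on the taskboard.
enum class ElementState { On, Off, Up, Down, Center };

// One recognized element: what it is, where it is in the image, and its state.
struct Detection {
    ElementKind kind;
    std::string id;     // hypothetical element identifier
    double x, y;        // pixel coordinates of the element's center
    ElementState state;
};

// LEDs and numpad buttons are ON/OFF; switches are UP/DOWN, and only some
// switches also have a CENTER position.
bool isValidState(ElementKind kind, ElementState s, bool hasCenter) {
    switch (kind) {
    case ElementKind::Led:
    case ElementKind::NumpadButton:
        return s == ElementState::On || s == ElementState::Off;
    case ElementKind::Switch:
        return s == ElementState::Up || s == ElementState::Down ||
               (hasCenter && s == ElementState::Center);
    }
    return false;
}

// Builds a detection, asserting that its state is legal for its kind.
Detection makeDetection(ElementKind kind, std::string id, double x, double y,
                        ElementState state, bool hasCenter = false) {
    assert(isValidState(kind, state, hasCenter));
    return Detection{kind, std::move(id), x, y, state};
}
```

Encoding the legal states per kind catches impossible outputs (such as an LED reported as UP) before they reach the scorer.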

Illustrations of numpad buttons, LEDs and switches on a Lab3 picture

Technologies

Because I won the contest, my solution was sold to NASA, so I cannot publicly give technical details about it. Here are the technologies I used:

- The program was written in C++ using the Qt framework

- I used Qt for the user interface (windows, image display/loading/saving)

- I used the Template Numerical Toolkit library for matrix manipulations

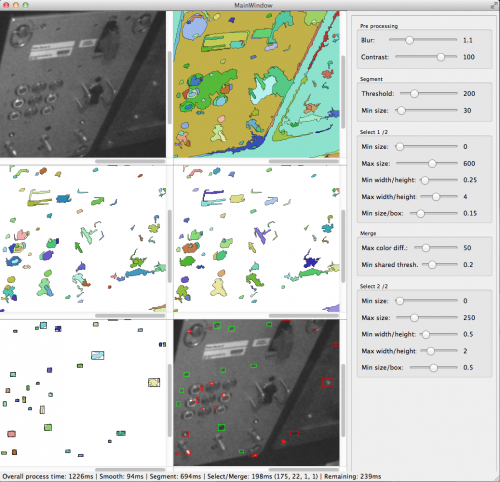

- I used the Efficient Graph-Based Image Segmentation algorithm by Pedro F. Felzenszwalb and Daniel P. Huttenlocher

- Principal Component Analysis

- Neural networks

- Image segmentation

- Homography
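Since I cannot describe how homography was used in my submission, here is only a generic sketch of the technique: estimating a planar homography from exactly four point correspondences (for example, four known taskboard corners in a reference layout and their detected positions in a photo), then projecting any other reference point into the image. It fixes H[2][2] = 1 and solves the resulting 8x8 linear system with Gauss-Jordan elimination; all function names are hypothetical, and a robust system would use more than four points and outlier rejection.

```cpp
#include <array>
#include <cassert>
#include <cmath>
#include <utility>

using Point = std::pair<double, double>;
using Mat3 = std::array<std::array<double, 3>, 3>;

// Solves the augmented 8x9 system [A | b] by Gauss-Jordan elimination with
// partial pivoting. Returns false if the system is (numerically) singular.
static bool solve8(std::array<std::array<double, 9>, 8> m, std::array<double, 8>& x) {
    for (int col = 0; col < 8; ++col) {
        int pivot = col;
        for (int r = col + 1; r < 8; ++r)
            if (std::fabs(m[r][col]) > std::fabs(m[pivot][col])) pivot = r;
        if (std::fabs(m[pivot][col]) < 1e-12) return false;
        std::swap(m[col], m[pivot]);
        for (int r = 0; r < 8; ++r) {
            if (r == col) continue;
            double f = m[r][col] / m[col][col];
            for (int c = col; c < 9; ++c) m[r][c] -= f * m[col][c];
        }
    }
    for (int i = 0; i < 8; ++i) x[i] = m[i][8] / m[i][i];
    return true;
}

// Estimates H (with H[2][2] = 1) such that dst[i] ~ H * src[i] for 4 points,
// using u = (h0 x + h1 y + h2) / (h6 x + h7 y + 1), and likewise for v.
bool homographyFrom4(const std::array<Point, 4>& src,
                     const std::array<Point, 4>& dst, Mat3& H) {
    std::array<std::array<double, 9>, 8> m{};
    for (int i = 0; i < 4; ++i) {
        auto [x, y] = src[i];
        auto [u, v] = dst[i];
        m[2 * i]     = {x, y, 1, 0, 0, 0, -u * x, -u * y, u};
        m[2 * i + 1] = {0, 0, 0, x, y, 1, -v * x, -v * y, v};
    }
    std::array<double, 8> h{};
    if (!solve8(m, h)) return false;
    H = {{{h[0], h[1], h[2]}, {h[3], h[4], h[5]}, {h[6], h[7], 1.0}}};
    return true;
}

// Projects a point with H, dividing by the homogeneous coordinate w.
Point applyHomography(const Mat3& H, Point p) {
    auto [x, y] = p;
    double w = H[2][0] * x + H[2][1] * y + H[2][2];
    assert(std::fabs(w) > 1e-12 && "point maps to infinity");
    return {(H[0][0] * x + H[0][1] * y + H[0][2]) / w,
            (H[1][0] * x + H[1][1] * y + H[1][2]) / w};
}
```

Once H is known, the expected position of every button, LED or switch in the reference layout can be projected into the picture, which is one plausible way to reason about elements that are partially hidden.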

The submitted source code can, however, be found on the official website. Please note that we had to submit the source code as a single file, so it might be difficult to read (I wrote my submission across many files and used a PHP script to combine them into one).

Snapshot showing how the Efficient Graph-Based Image Segmentation and its component preselection were calibrated
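For readers unfamiliar with Felzenszwalb and Huttenlocher's algorithm, here is a simplified sketch of its core: pixels are graph nodes, 4-connected neighbors are joined by edges weighted by intensity difference, edges are processed in increasing order, and two components merge only if the joining edge is no heavier than min(Int(C1) + k/|C1|, Int(C2) + k/|C2|), where Int(C) is the largest internal edge weight. Unlike the original paper, it works on grayscale only, skips Gaussian smoothing and the final small-component merge, and the size-based preselection helper is a hypothetical stand-in, not the preselection I actually calibrated.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <numeric>
#include <utility>
#include <vector>

struct Edge { int a, b; float w; };

// Disjoint-set forest tracking component size and Int(C).
class DisjointSet {
public:
    explicit DisjointSet(int n) : parent_(n), size_(n, 1), internal_(n, 0.0f) {
        std::iota(parent_.begin(), parent_.end(), 0);
    }
    int find(int x) {
        while (parent_[x] != x) { parent_[x] = parent_[parent_[x]]; x = parent_[x]; }
        return x;
    }
    // Merges two roots; edges arrive in increasing order, so w is the new Int(C).
    void unite(int a, int b, float w) {
        if (size_[a] < size_[b]) std::swap(a, b);
        parent_[b] = a;
        size_[a] += size_[b];
        internal_[a] = w;
    }
    int size(int root) const { return size_[root]; }
    float internal(int root) const { return internal_[root]; }
private:
    std::vector<int> parent_, size_;
    std::vector<float> internal_;
};

// Segments a row-major grayscale image; returns a component label per pixel.
// Larger k favors larger components.
std::vector<int> segmentGraph(const std::vector<float>& img, int width, int height, float k) {
    assert(static_cast<int>(img.size()) == width * height);
    std::vector<Edge> edges;
    for (int y = 0; y < height; ++y)
        for (int x = 0; x < width; ++x) {
            int p = y * width + x;
            if (x + 1 < width)  edges.push_back({p, p + 1, std::fabs(img[p] - img[p + 1])});
            if (y + 1 < height) edges.push_back({p, p + width, std::fabs(img[p] - img[p + width])});
        }
    std::sort(edges.begin(), edges.end(),
              [](const Edge& l, const Edge& r) { return l.w < r.w; });

    DisjointSet ds(width * height);
    for (const Edge& e : edges) {
        int a = ds.find(e.a), b = ds.find(e.b);
        if (a == b) continue;
        float ta = ds.internal(a) + k / ds.size(a);
        float tb = ds.internal(b) + k / ds.size(b);
        if (e.w <= std::min(ta, tb)) ds.unite(a, b, e.w);
    }
    std::vector<int> labels(width * height);
    for (int i = 0; i < width * height; ++i) labels[i] = ds.find(i);
    return labels;
}

// Hypothetical preselection: labels of components whose pixel count is in [minSize, maxSize].
std::vector<int> selectComponentsBySize(const std::vector<int>& labels, int minSize, int maxSize) {
    std::vector<int> count(labels.size(), 0);
    for (int l : labels) ++count[l];
    std::vector<int> roots;
    for (int r = 0; r < static_cast<int>(labels.size()); ++r)
        if (count[r] > 0 && count[r] >= minSize && count[r] <= maxSize) roots.push_back(r);
    return roots;
}
```

The parameter k and the size bounds are exactly the kind of values that need calibrating against real pictures, as in the snapshot above.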

Results

My submission had an overall success rate of 89%. The algorithm was able to detect the correct states and element positions even when some parts of the taskboard were not visible. It generally took 3 to 5 seconds to detect all buttons, LEDs and switches.

Result sample

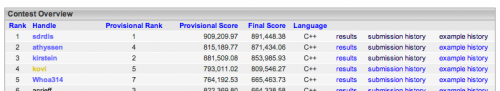

The contest originally lasted 3 weeks, but a second contest extended it by one more week. I finished in 2nd place in the first contest and, after improving the algorithm's performance, in 1st place in the second. Official standings pages can be found here, here, and here.